AI = Fake Flow State (and it kills your memory)

Do you notice you're getting tired? A different kind of tired?

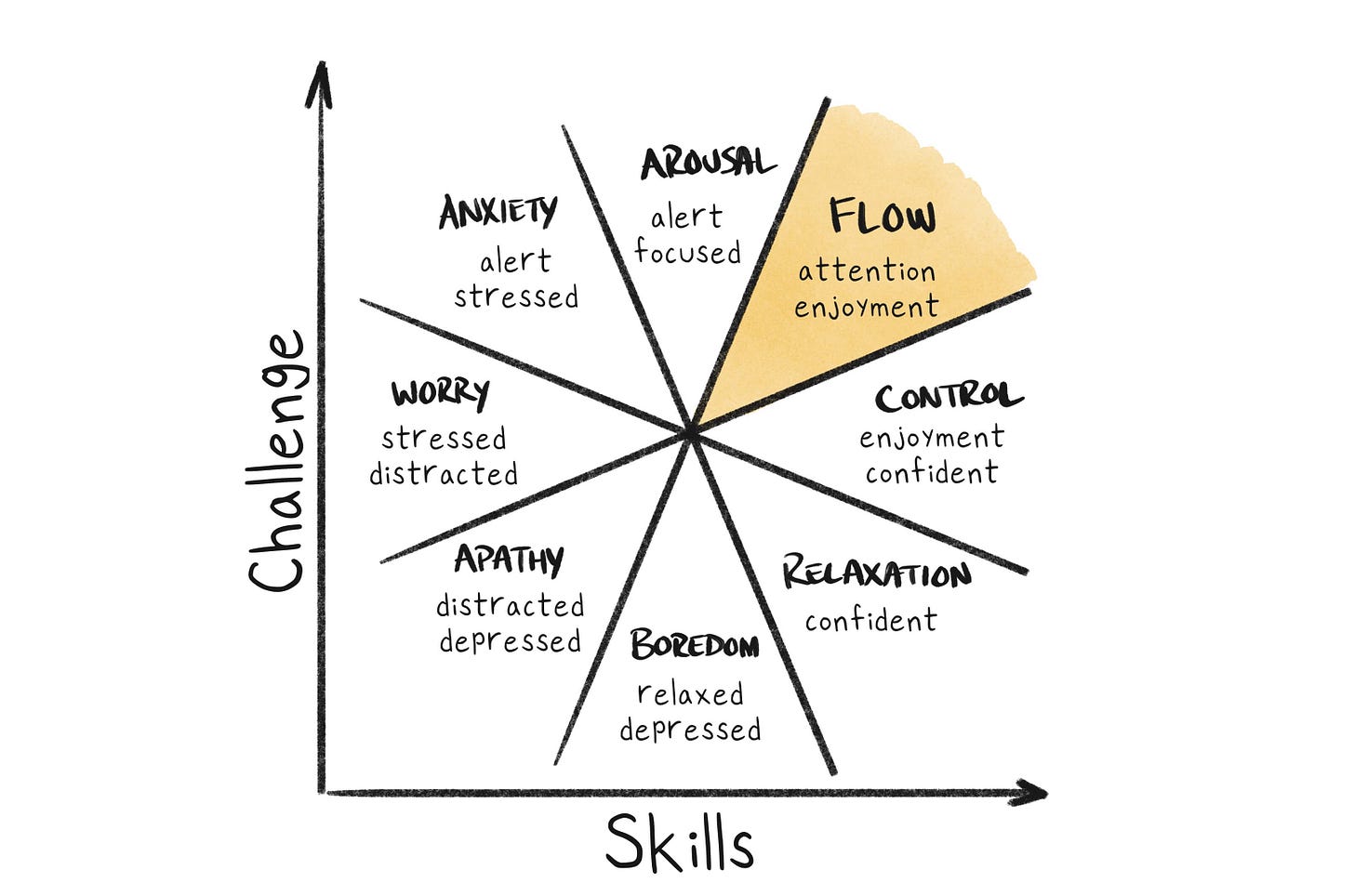

Flow is the ultimate working state. But what is fake flow?

It’s that feeling like “I was productive for two hours” followed by “why does my brain feel like oatmeal?”

Real flow

You feel sharper as you go.

You emerge tired but satisfied.

You remember what you figured out.

You feel more capable afterward.

Fake flow

You feel immersed, fast, and productive.

You emerge depleted, scattered, or oddly empty.

You produced things, but your own understanding did not deepen much.

You feel dependent on continuing the interaction to keep the state alive.

That does not mean AI is bad. It means the way you use it matters.

Your brain says, “We were busy.” But another part says, “Did we actually build anything in ourselves?”

That gap feels like fatigue.

My biggest concern is actually memory...

AI fatigue happens when stimulation, speed, and apparent progress outrun memory formation, meaning-making, and cognitive ownership.

The core problem is that the brain can mistake exposure for integration. You touched a lot of ideas, made a lot of moves, and saw a lot of language, but very little got deeply encoded. Here is why:

1. Generative friction drops

You no longer have to strain to produce first drafts, first structures, first formulations.

That sounds great, but friction is not only an obstacle. Friction is often the thing that forces selection, sequencing, and recall. When you struggle to retrieve a word, shape an idea, or organize an argument, you are not just producing output. You are strengthening the pathways that make that output yours.

2. Evaluation load rises

Even when AI helps, you now have to constantly judge:

Is this right?

Is this me?

Is this better?

Which of these five versions is strongest?

What should I ask next?

So the burden shifts from generation to curation. That feels lighter in the moment, but it is often more diffuse and mentally leaky.

3. Consolidation gets disrupted

The pace of interaction encourages immediate continuation instead of pause, rehearsal, synthesis, or rest. So instead of:

“read → think → reformulate → remember,”

you get:

“read → react → extend → replace → move on.”

That is a lousy recipe for durable memory.

Why this hurts memory encoding

1. It reduces retrieval effort

One of the best ways to remember something is to pull it out of your own mind before seeing the answer. That is why testing beats rereading. The act of retrieval strengthens the memory trace. But with AI, the answer arrives before retrieval fully happens. Your brain starts to grope for the structure, the phrase, the connection, and then—bam—the model serves a neat version. Convenient, yes. But that convenience can interrupt the exact mental struggle that would have encoded it.

2. It gives you completed language before you build an internal schema

A huge part of learning is not just getting the answer. It is building the mental scaffolding that makes the answer make sense. When AI gives polished summaries too early, you may recognize the logic without constructing it. This creates a dangerous illusion: “I understand this” can actually mean “this sounds familiar and coherent.” Recognition is weak. Reconstruction is strong.

That is the difference between:

“Yes, that makes sense” and

“I could explain this from scratch.”

3. It floods working memory

Working memory is limited. AI outputs often contain many branches, examples, refinements, caveats, and options all at once. Even good output can become too much output.

When that happens, your brain switches from deep encoding to temporary handling:

scanning

sorting

comparing

skimming for relevance

That mode is efficient for navigation, terrible for long-term retention.

The brain is basically saying: “I cannot deeply store ten semi-finished conceptual threads at once, so I will keep them all shallow.” That shallow handling feels like fog later.

4. It breaks the generation effect

People remember information better when they generate it themselves than when they simply read it. AI weakens that by pre-generating the structure. Even when you edit the result, editing is often cognitively thinner than making. That is why someone can spend an hour co-writing with AI and still struggle to remember the key insights the next day. They interacted with the material, but did not fully generate it.

5. It weakens “desirable difficulty”

Some difficulty is productive. Not all struggle is waste. Certain forms of challenge create stronger learning because they force deeper processing.

AI often removes exactly the type of difficulty that produces durable encoding:

finding the right wording

reconstructing the argument

deciding what matters most

carrying a thought long enough to shape it

6. It encourages serial novelty over rehearsal

Memory likes revisiting. AI encourages onward motion.

Every prompt opens another door:

“Now summarize this.”

“Now make it punchier.”

“Now compare it to X.”

“Now turn it into a framework.”

“Now make it a tweet thread.”

That feels productive, but it often prevents the stillness required for consolidation. The brain needs some settling time. Some repetition. Some closure.

Instead, AI creates a conveyor belt of unfinished cognitive meals.

7. It externalizes cognitive state

Normally, when thinking deeply, you keep a fragile internal model active in your mind. That is effortful but important. With AI, more of the state lives outside you:

the thread holds the context

the model remembers the prior steps

the visible text carries the structure

So your brain does less internal holding.

That is useful in the short term. But internal holding is part of what creates durable mental models. If the chat is carrying the burden, your brain can become less practiced at maintaining and updating conceptual structure on its own.

You are not just outsourcing answers. You are outsourcing continuity.

So you feel both overstimulated and underbuilt.

That is why “brain fog” is such an accurate report. The issue is not simply exhaustion. It is high cognitive traffic with low structural residue.

You were mentally active, but too little of that activity hardened into memory.

The deepest danger

The biggest risk is not just temporary fog. It is atrophy of self-generated cognition.

This is a good, concise summary of the unexpected downside of outsourcing our cognitive processes to a mechanical prosthesis.

If you depend on a crutch to stand and walk, then you are unlikely to regain the strength to stand upright on your own.